Vulnerability Management Process

Introduction

This document details how to execute the Vulnerability Management Process as documented in our Vulnerability Management Policy. The policy document is the source of truth in case there are any discrepancies between the two documents. If you find conflicting information please raise it to the attention of the Security team.

Vulnerability management process

- Discovery: A vulnerability from any source becomes an issue in the tracking board, in the Incoming column.

- Triaging: a member of the security team triages the vulnerability. If confirmed, they write a technical report in the security-issues repo and engage the code owner. This happens within 3 business days.

- Engineering estimation: the code owner suggests a patch and provides an estimate of the effort to complete it within the SLA defined by the severity level assigned during the triage process.

- Remediation: the code owner patches the issue. Security verifies the patch.

- Disclosure: Security discloses the vulnerability according to our vulnerability disclosure process.

Vulnerability sources

We use a few tools to find vulnerabilities in our product, infrastructure and assets:

- Automated SAST/DAST: Trivy, Checkov

- Manual Testing

- Customer reports

- Internal reports

- Bug Bounty Program

We use the following to manage them and record information:

- Vulnerability board in GitHub: where we track the status of open vulnerabilities.

- security-issues repository: where we store technical write ups for vulnerabilities and discuss with Engineering teams.

Vulnerability reports

Our vulnerability reports are issues in the security-issues repository. There are a few issue templates to choose from when creating a new report. The important labels to be aware of are:

- vulnerability-report: all vulnerability reports should have this label. The repository is also used for other purposes, so this helps with filtering.

- severity/{critical/high/medium/low/info}: the severity of the issue.

- in-sla: reports that are still within the SLA.

- risk-accepted: reports where we have formally accepted the risk of the vulnerability.

- vulnerability-extended: reports where we have extended the SLA.

- not-prod: reports affecting code that is not production, for example the Handbook.
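As a minimal sketch of how the labels above could be checked before a report is filed, the Python snippet below flags a missing vulnerability-report label or a missing/duplicated severity label. The function name `label_problems` and the "exactly one severity label" rule are assumptions made for illustration; they are not defined by the Policy.

```python
# Severity labels as listed above (severity/{critical/high/medium/low/info}).
SEVERITY_LABELS = {
    "severity/critical",
    "severity/high",
    "severity/medium",
    "severity/low",
    "severity/info",
}


def label_problems(labels):
    """Return a list of problems with a report's labels (empty if none).

    Hypothetical helper: assumes every report needs the vulnerability-report
    label and exactly one severity label.
    """
    labels = set(labels)
    problems = []
    if "vulnerability-report" not in labels:
        problems.append("missing vulnerability-report label")
    severities = labels & SEVERITY_LABELS
    if len(severities) != 1:
        problems.append(
            f"expected exactly one severity label, found {len(severities)}"
        )
    return problems
```

For example, `label_problems(["vulnerability-report", "severity/high", "in-sla"])` returns an empty list, while a report carrying only `severity/high` is flagged for the missing vulnerability-report label.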

Vulnerability Board

We track all vulnerabilities in the Tracked Vulns GitHub board. We’ll continue to automate and improve this board so that we can save time while properly tracking metrics for this process.

The board consists of issues of type Vulnerability in columns that represent different stages of this process. It’s important to move the issues to the appropriate columns asap because that affects metrics. Here are the columns (from left to right) and when an issue should be in it:

- Incoming: before the vulnerability is triaged.

- Needs triaging: while the Security engineer is triaging and creating the report in security-issues.

- Pending estimation: developers acknowledge/confirm reception of the report and are estimating the time to fix the vulnerability.

- Pending fix: when we already have the estimation but the Engineering has not sent a patch yet.

- Validating: when the Security team is engaged to verify the patch. It’s common to have back-and-forth at this stage.

- Disclosing: while we are preparing the disclosure (if needed).

- Risk accepted: issues that are not fixed and the team owning the affected code/asset assumes or accepts the risk.

1. Discovery

As our security program grows we will continue to add tooling and processes that find vulnerabilities across our systems and code.

Any vulnerability found from these sources becomes an issue of type Vulnerability in the Tracked Vulns GitHub board, in the Incoming column. The issue contains:

- Descriptive title

- Short description

- Source of vulnerability:

  - Penetration Testing

  - Bug Bounty Program

  - Vulnerability Scanning

  - CI/CD pipeline security checks

  - Internal responsible disclosure

  - Public notification

  - Customer notification

- Link to the technical report or source of the vulnerability

The goal is for this step to be fully automated, with all vulnerabilities from all sources automagically showing up in the Incoming column. Until then, when you need to create a new issue, use the Vulnerability issue template in the security-issues repository, fill out the details and press Create.

Once the Security engineer picks up a vulnerability they should move it to the Needs triaging column.

2. Triaging

The Security team is responsible for triaging vulnerabilities, which includes:

- Confirming that it’s an exploitable issue

- Determining its impact in our systems

- Writing a technical report (when needed)

- Assigning a severity to the vulnerability

- Engaging with the code owner

Vulnerability reports are often false positives and we hope to prove we are not vulnerable. It’s important to not be biased by this and ensure all reports are thoroughly reviewed. The Security team will reply to the initial report and create the technical writeup within 3 days of being alerted.

Confirming vulnerabilities and determining impact

Receiving a vulnerability report doesn’t mean our application can be attacked because of it, especially when considering results from automated scanners. It’s our job to confirm what attacks are possible due to a vulnerability and its impact in our application.

When triaging vulnerabilities remember to test for more scenarios than were initially reported. For example, try an exploit with different access levels, deployment models, etc. It’s not unusual to receive a vulnerability report that can be expanded into more serious scenarios. It’s very important to follow up in these situations.

If the issue is considered not a vulnerability the process stops here. Each source of vulnerability has different procedures for closing a report as NOT AN ISSUE.

Writing the technical report

If the vulnerability is confirmed, the assigned Security Engineer must create a technical writeup in the security-issues repository. The code owner of the vulnerability is tagged so they can continue this process. The repository has a Vulnerability issue template that should be used. It contains sections and information to be filled out.

The technical report has multiple audiences:

- The engineer responsible for the vulnerability

- Other Security engineers who may need to follow up

- Product to help prioritize it

- Management

It’s important that the report has all technical details to allow the engineers to have one place with all the information about the vulnerability. It’s also important to provide a summary for anyone who doesn’t need that level of detail.

Make use of screenshots and/or videos to properly showcase the vulnerability.

The issue should have a label severity/{low|medium|high|critical}, depending on the severity.

Issue templates

As we continue to add new vulnerability scanners to our program we have seen the need to create more specific issue templates in the security-issues repository. If not dealing with a vulnerability from one of the scanners, default to using the Vulnerability template.

Assessing severity

One of the most important parts of this process is determining the severity of the vulnerability. Whether the vulnerability comes from bug bounty hunters who want lots of $$$ or automated tooling that lacks context, our Security team is best positioned to assess the real impact that a vulnerability has in our application or systems.

Our policy defines four severity levels: Critical, High, Medium and Low.

It’s important to be consistent when assigning severities. To properly assess severity, consider the impact of the vulnerability and the ease of exploiting it. Vulnerabilities that require an attack chain, privileged account or user interaction are more difficult to exploit than vulnerabilities that can be exploited unauthenticated.

Here is high-level guidance and examples:

- Critical: critical vulnerabilities are likely to be P0 or P1 incidents, meaning we are under attack or trying to confirm it. It’s an all-hands-on-deck situation where the vulnerability needs to be mitigated as soon as possible. It’s a combination of highest impact with easy exploitation. Examples of high impact: leaking of highly sensitive information (such as private code, payment information), service outages, widespread account takeovers, authorization bypasses (any user becoming a site-admin), RCE in our production pods, easily exploitable XSS (stored or one-click). These vulnerabilities do not require privileged accounts or user interaction for exploitation.

- High: high vulnerabilities could have the same impact as Critical ones but in a limited scope. These vulnerabilities are not as easily exploitable as Critical ones, either because of mitigating controls or requiring a specific setup or privileged account to work. Examples: RCE but requiring a site-admin account, account takeover of a specific account and not all accounts, one-click XSS affecting any user.

- Medium: vulnerabilities that by themselves do not lead to high impact but can be leveraged as part of an attack chain. Bypassing protections that serve as defense-in-depth and not SPOFs also falls under this category. Oftentimes it’s a high risk vulnerability that has a compensating control covering us. Examples: bypassing CSRF protections (there are layers of protection), open redirects, XSS with a complex attack chain.

- Low: bugs that can be used for leaking information that is not highly sensitive, or issues that cannot be chained into more serious attacks. Examples: leaking of organization membership, public repository information leak.
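To help keep assignments consistent, the guidance above can be roughed out as a first-pass helper. This is only a sketch under simplifying assumptions: the function name `suggest_severity` and its four yes/no inputs are hypothetical, it ignores compensating controls, and the triaging Security engineer always makes the final call.

```python
def suggest_severity(high_impact, easy_to_exploit, limited_scope, chainable):
    """Suggest a starting severity from four yes/no answers.

    Hypothetical simplification of the guidance above:
    - high_impact: e.g. sensitive data leak, outage, account takeover, RCE
    - easy_to_exploit: no privileged account, attack chain or special setup
    - limited_scope: affects a specific account or setup, not everyone
    - chainable: can be leveraged as part of an attack chain
    """
    if high_impact and easy_to_exploit and not limited_scope:
        return "critical"
    if high_impact:
        # Same impact as Critical, but harder to exploit or narrower in scope.
        return "high"
    if chainable:
        return "medium"
    return "low"
```

For instance, an RCE that requires a site-admin account maps to `suggest_severity(True, False, False, False)`, which suggests "high", while an open redirect maps to `suggest_severity(False, True, False, True)`, which suggests "medium".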

Why not just use CVSS? CVSS is a strict system to help categorize vulnerabilities across all systems, tech stacks and scenarios under the sun. As a result it does not allow us to be precise and add nuance to the assessment.

When to open an incident

While triaging a vulnerability it may be necessary to open an incident. Do so when all of the following apply:

- The vulnerability is High or Critical;

- We have confirmed or have strong reason to believe that we and/or our customers are affected;

- Customer data or application availability is compromised.
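The three conditions above must all hold at once. A minimal sketch of that check, with a hypothetical function name and boolean inputs chosen for illustration:

```python
def should_open_incident(severity, affected_confirmed_or_likely, data_or_availability_compromised):
    """Return True only when all three incident criteria above are met.

    Hypothetical helper: `severity` is one of critical/high/medium/low;
    the two flags are the triaging engineer's yes/no answers.
    """
    return bool(
        severity.lower() in {"high", "critical"}
        and affected_confirmed_or_likely
        and data_or_availability_compromised
    )
```

A High vulnerability where customers are affected but neither data nor availability is compromised does not meet the bar: `should_open_incident("high", True, False)` is `False`.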

3. Engineering estimation

Once the technical write up is ready a code owner is engaged to provide an estimation of the effort and when it will be completed.

When possible, the effort estimate should also cover adding regression tests to ensure the issue is not reintroduced later by accident.

To identify who the code owner is, use a combination of git blame and looking up our organization chart.

Code owners are notified in two ways:

- Add a comment to the technical report, tagging the team

- Write a message in the team’s Slack channel as a friendly heads up

The SLA for replying with an estimate depends on the severity:

- Critical: 1 day

- High: 3 days

- Medium: 10 days

- Low: 10 days

If the code owner cannot adhere to the SLAs they should request an Exception. Once they have provided this estimation the issue should be moved to the Pending Fix column.

4. Remediation

Once the Engineering team begins working on the patch the Security team is readily available to provide any consultation necessary.

When the patch is ready, the Security team will be engaged as reviewers to verify the patch. At this point move the issue to the Validating column. Whenever possible, it’s important to re-test the proof of concept on the patch and show proof (such as a screenshot) that the issue is fixed.

Once the issue is confirmed to have been fixed the code can be merged. The issue should be moved to the Disclosing column if it is to be disclosed or to the Resolved column if not.

The SLA for remediation depends on the severity:

- Critical: 3 days

- High: 10 days

- Medium: 40 days

- Low: 100 days
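The estimation and remediation SLAs lend themselves to a simple lookup. The sketch below computes both due dates for a report. It assumes calendar days and that both clocks start on the day the technical report is created; neither assumption is stated in this document, so check the Policy before relying on it. The names `ESTIMATE_SLA_DAYS`, `REMEDIATION_SLA_DAYS` and `sla_due_dates` are hypothetical.

```python
from datetime import date, timedelta

# SLA values copied from the estimation and remediation sections.
ESTIMATE_SLA_DAYS = {"critical": 1, "high": 3, "medium": 10, "low": 10}
REMEDIATION_SLA_DAYS = {"critical": 3, "high": 10, "medium": 40, "low": 100}


def sla_due_dates(severity, report_created_on):
    """Return the estimate and remediation due dates for a severity.

    Assumption: calendar days counted from the report creation date.
    Raises KeyError for an unknown severity.
    """
    key = severity.lower()
    return {
        "estimate_due": report_created_on + timedelta(days=ESTIMATE_SLA_DAYS[key]),
        "remediation_due": report_created_on + timedelta(days=REMEDIATION_SLA_DAYS[key]),
    }
```

For a High report created on 1 January 2024, `sla_due_dates("high", date(2024, 1, 1))` gives an estimate due date of 4 January and a remediation due date of 11 January.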

5. Disclosure

Once vulnerabilities are patched it is our responsibility to properly disclose them to our users and customers. We disclose vulnerabilities through GitHub security advisories, the CHANGELOG and (optionally) the Security Notifications mailing list.

Publishing GitHub Security advisories

We publish GitHub Security advisories in the repository containing the vulnerability, usually sourcegraph/sourcegraph. This is a recent example. To publish a security advisory:

- Create a draft advisory as per GitHub docs and fill out the fields:

  - Ecosystem: leave empty unless it’s for an npm or golang package. This is empty in the vast majority of cases.

  - Package name: leave empty unless it’s a reusable package in an ecosystem, like an npm package. This is empty in the vast majority of cases.

  - Affected versions: range of affected versions of Sourcegraph, for example `<3.30.1`. Use `<` instead of `<=` where needed.

  - Patched versions: patched version, for example `3.30.1`.

  - Severity: the severity of the vulnerability. Don’t use the CVSS calculator unless the calculated severity matches our initial severity rating.

  - CWE: find the closest CWE in this list. If in doubt, use more generic CWEs such as `CWE-20: Improper Input Validation`.

  - CVE Identifier: `Request CVE ID later`.

  - Title: use a descriptive title and err on the side of being extra verbose. These titles are used in tooling across the industry and it’s best to not leave much room for interpretation.

  - Description: follow the GitHub template and include thorough details. If the vulnerability was reported by a security researcher, ensure to credit them in the advisory description.

- Get feedback from the team on the advisory.

- Publish the security advisory as per GitHub docs and request a CVE ID.
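When filling in the Affected versions and Patched versions fields, it can help to sanity-check the range against a few known versions. The sketch below shows what a range of the form `<3.30.1` means, assuming plain dotted numeric versions such as 3.30.1 (no pre-release suffixes). The helper names are hypothetical and this is not how GitHub evaluates ranges internally.

```python
def parse_version(text):
    """Turn a dotted numeric version such as '3.30.1' into (3, 30, 1)."""
    return tuple(int(part) for part in text.split("."))


def is_affected(version, patched_version):
    """Return True when `version` falls in the range '<patched_version'.

    Versions are compared numerically, part by part, so 3.9.5 correctly
    sorts before 3.30.1 (a plain string comparison would get this wrong).
    """
    return parse_version(version) < parse_version(patched_version)
```

With a patched version of 3.30.1, `is_affected("3.30.0", "3.30.1")` is `True` and `is_affected("3.30.1", "3.30.1")` is `False`, which is why the range uses `<` rather than `<=`.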

CHANGELOG entries

Write a short entry in the CHANGELOG such as Patched low risk information disclosure. More details in the [security advisory](link to advisory).

Notifying the Security Notifications mailing list

When a new security update is published on GitHub we have the option of sending out a notification email to our Subscription- Sourcegraph Security Notifications list. This is not an obligation and should be reserved for high-impact vulnerabilities. To send new emails to the mailing list:

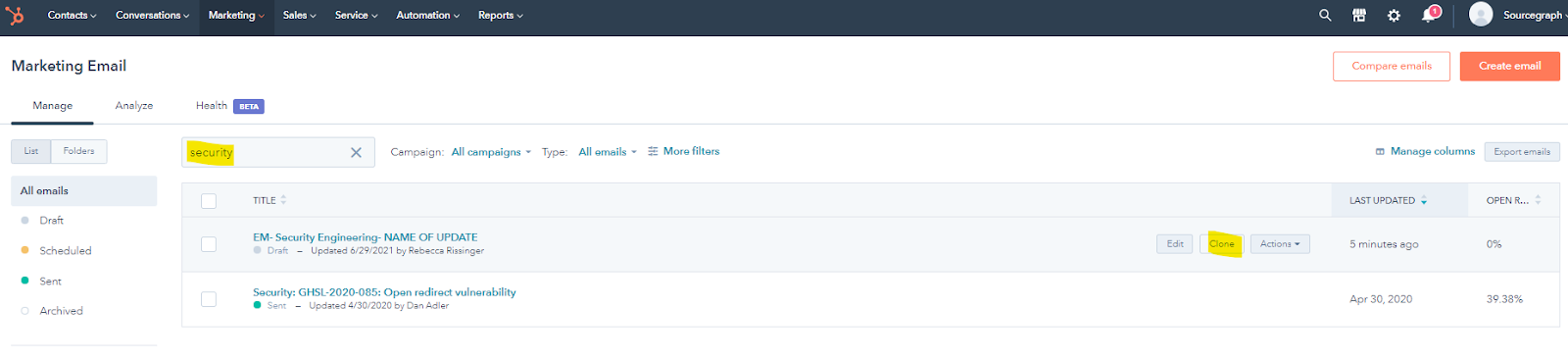

- Log into Hubspot, head over to the Marketing tab and select `Email` from the dropdown.

- Once on the Email section of Hubspot, search for “security” and it should pull up a previously sent security update.

- Hover over the email and click on `Clone`:

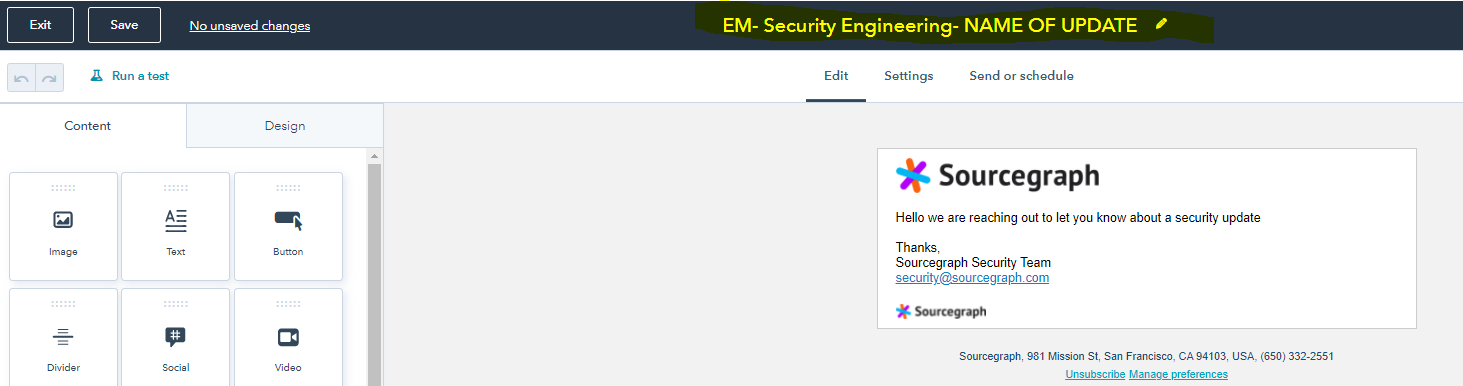

- On the Edit tab:

  - Update the internal name of the email so that it includes the name of the update (highlighted below), including the CVE-ID

  - Update the email body contents to match the below template. Replace `${SEVERITY}` with the appropriate value and link `security advisory` to the published GitHub advisory.

Hello,

We are reaching out to inform you of a recently disclosed ${SEVERITY} severity vulnerability in Sourcegraph.

For more details please see our security advisory[link].

Best regards,

Sourcegraph Security Team

- On the Settings tab:

  - From name: `Sourcegraph Security Team`

  - From address: `security@sourcegraph.com`

  - Subject Line: `Sourcegraph Security Update - CVE-{CVE-ID}`

  - Preview text: Optional

  - Internal name: same as the internal name set on the Edit tab.

  - Language: `English`

  - Subscription type: `Sourcegraph Security Notifications & Updates`

  - Office location: `Sourcegraph (default)`

  - Campaign: `Security Team Update`

- On the Send or schedule tab:

  - Send to: `Subscription- Sourcegraph Security Notifications`

  - Don’t send to: `Subscription- Marketing Email | Champions Communications`, and `LST EML | Staff, non-core, opted out`

- Sending the notice (must be done within 5 days of the advisory being published):

  - Post a message in `#demand-gen-internal` requesting to send out a Hubspot security email. Provide the below details:

    - A link to the completed email (be sure to confirm the above details first)

    - When you want to send out the notice

    - Any additional details or special cases for this notice (ex. send only to a specific user base or domain)
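The email body template from the Edit tab step uses a `${SEVERITY}` placeholder, which happens to match the syntax of Python's standard `string.Template`. As a rough sketch for drafting the text before pasting it into Hubspot, the snippet below fills in the template. The `${ADVISORY_URL}` placeholder and the `render_email` function are assumptions added for illustration; in Hubspot itself the words "security advisory" are hyperlinked instead.

```python
from string import Template

# Body text copied from the template above; ${ADVISORY_URL} is a hypothetical
# stand-in for the hyperlink on "security advisory".
EMAIL_BODY = Template(
    "Hello,\n\n"
    "We are reaching out to inform you of a recently disclosed ${SEVERITY} "
    "severity vulnerability in Sourcegraph.\n\n"
    "For more details please see our security advisory: ${ADVISORY_URL}\n\n"
    "Best regards,\n\n"
    "Sourcegraph Security Team\n"
)


def render_email(severity, advisory_url):
    """Fill in the notification body; raises KeyError if a value is missing."""
    return EMAIL_BODY.substitute(SEVERITY=severity, ADVISORY_URL=advisory_url)
```

`Template.substitute` raises an error when a placeholder is left unfilled, which guards against sending an email that still contains a literal `${SEVERITY}`.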

6. Acceptance of vulnerabilities

There are vulnerabilities that we may decide to not fix. For example small vulnerabilities that enable features, or items where the cost to fix is too great.

An accepted vulnerability must be properly documented. Ensure the following:

- The GitHub issue is updated with:

  - Why the vulnerability cannot/should not be fixed.

  - Any mitigating factors.

  - Effort and work required to actually put in a fix.

  - A `risk-accepted` label.

- The issue is moved to the `Risk Accepted` column.

A member of the Security Engineering team will assess the risk acceptance request and engage the appropriate risk acceptance owner based on the risk level as defined in the Policy.

7. Exceptions

We understand that situations may arise where one or more of the parties involved cannot comply with their responsibilities and/or SLAs. If that’s the case, the code owner should inform the Security team of why they can’t adhere to the SLA and when they will be able to patch it. The Security team is responsible for accepting these exceptions. An exception should be logged and contain the following information:

- System(s) affected

- Vulnerability

- How the vulnerability was discovered

- Why it can’t be fixed within the SLA

- Any compensating controls and/or situations that reduce the risk

- How long the exception is requested for

The exception approval is also logged in the same application where the request is logged. The exception approver depends on the severity of the vulnerability, as defined in the Policy.
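The information required for an exception request can be captured as a small record so that incomplete requests are caught early. This is a sketch only: the class name, field names and the `missing_fields` helper are hypothetical, and the tool where exceptions are actually logged may structure them differently.

```python
from dataclasses import dataclass, fields


@dataclass
class ExceptionRequest:
    """One field per item in the list of required exception information."""
    systems_affected: str
    vulnerability: str
    discovery_source: str
    reason_sla_cannot_be_met: str
    compensating_controls: str  # write "none" explicitly if there are none
    requested_duration_days: int


def missing_fields(request):
    """Return the names of fields left empty (empty string or zero)."""
    return [f.name for f in fields(request) if not getattr(request, f.name)]
```

A request with an empty `reason_sla_cannot_be_met` would be returned by `missing_fields` as `["reason_sla_cannot_be_met"]`, prompting the code owner to complete it before the Security team reviews the exception.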

8. Product Security Patching

Security fixes for vulnerabilities are included in our regularly scheduled releases - please see our latest change log for details on included fixes. Managed instances are upgraded each release cycle and patched when applicable as part of our managed service.

Once a vulnerability has been fixed, it may require a dedicated patch release, depending on its severity and impact. Vulnerabilities meeting the criteria below will follow this process:

- The vulnerability is High or Critical;

- We have confirmed customers are affected / are at risk without a patch;

- The vulnerability is likely to be exploited.

Steps:

- (if managed instances are affected) Add a comment to the technical report in the security-issues repository tagging the @sourcegraph/delivery team requesting an upgrade for managed instances. Provide the following details:

  - Affected product version;

  - Affected instances;

  - A link to the merged PR / commits resolving the issue;

  - A brief summary of the impact.

- Submit a Patch Release request.

- Once the patch release is complete engage the Customer Engineering team to communicate to all affected customers. Managed instance customers must be informed that their environments have been patched. On-prem customer communications should specify a clear call to action for upgrading. Communications will include the following:

  - CVE Identifier;

  - Vulnerability Severity;

  - Description of the patched vulnerability (including its impact to unpatched deployments);

  - Affected product versions;

  - Patched product version;

  - Patch release links (changelog, GitHub release, release batch change);

  - Patch applied date (for managed instances).